Why Your Data Center Should Have Aisle Containment

As I said in my previous blog post, data centers in the United States use a full 1% of the nation’s power, so improving efficiency makes very real sense. In this series of blog posts about HVAC (heating, ventilation, and air conditioning) cooling strategies for computers, I summarize the main elements you should focus on to make the biggest gains. In this installment I focus on aisle containment.

Aisle containment can bring significant power savings of 10-40% and help you more effectively cool densely packed computer systems, like blade servers.

Thar She Blows

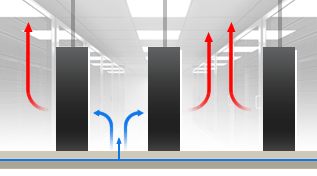

Air flow is an essential element of cooling efficiency strategies. Hot/cold aisle containment facilitates it by limiting the mixing of hot and cold air so that cold air gets to the servers and hot air is ejected outside.

In a nutshell, data center efficiency gains come from removing heat more effectively. A key to attaining those gains is the rule that the higher the temperature of the air you send to your CRAH/CRAC (“air handler”) units, the easier it is for the system to eject the heat outside.

Hot and Cold Aisles

In the past, IT gave little thought to airflow and instead just sought to make the entire computer room cold. In other words, they diluted heat by mixing it with very cool air. This strategy was satisfactory when computer rooms were relatively small and scarce. Once data centers became ubiquitous and computer densities in racks rose sharply, the archaic approach utterly failed to keep the equipment cool while wasting vast amounts of electricity.

As a first step towards improved cooling efficiency, data centers created separate hot and cold aisles where they pointed computer air intakes to the cold aisle and exhausted the heat into the hot aisles. This prevented the hot exhaust air from one row of servers from venting into the cold air intake of the adjacent row and improved cooling capabilities by ensuring that computers pulled cooler air into them.

Hot Air Return Plenums

While creating hot and cold aisles somewhat restricted mixing, the warm air would linger in the hot aisles and get pulled back around to server air intakes. It wasn’t until facilities built hot air return plenums in their ceilings that cooling efficiencies greatly improved. A plenum is an alternative to ducting. Think of it as a large contained pathway for air to flow through. For example, the raised floor in a computer room is a plenum space. In the same way that the raised floor supplies cool air to where it is needed, a hot air return plenum pulls the warm exhaust from computer equipment back to air handlers.

What does this accomplish? Remember that air conditioning is designed to remove heat. Cold air is simply a by-product of heat removal. Let me explain. In old telco clean rooms, the template followed by early data centers, you had to make the entire room cold enough so that heat would get sufficiently diffused by the cold air.

Unfortunately, when an entire room is cold, the air that returns to the air handlers is still relatively cold (typically about 72F/22C), which requires them to work very hard, including running compressors (also referred to as “DX cooling”), to make cold air even colder (~55F/13C). Compressors are the single most power-hungry component in traditional cooling systems. Not only do they require vast quantities of energy to run, but they cause a big power spike every time they cycle on. Since electrical utilities need to provide enough power to businesses to allow for those spikes, they add an extra charge for it called a “demand fee,” which is often quite substantial.
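To see why those spikes matter on the bill, here is a minimal Python sketch of how a demand fee is commonly calculated: energy (kWh) is billed at one rate, and the highest interval demand (kW) in the billing period is billed separately. The function name, the 15-minute interval, and both rates are hypothetical placeholders for illustration, not any utility's actual tariff.

```python
def power_bill(interval_kw, hours_per_interval, energy_rate, demand_rate):
    """Split a bill into an energy charge and a demand charge.

    interval_kw        -- average kW drawn in each metering interval
    hours_per_interval -- interval length in hours (0.25 for 15 minutes)
    energy_rate        -- hypothetical price per kWh
    demand_rate        -- hypothetical price per kW of peak demand
    """
    energy_kwh = sum(kw * hours_per_interval for kw in interval_kw)
    peak_kw = max(interval_kw)  # one short spike sets the demand for the whole period
    energy_charge = energy_kwh * energy_rate
    demand_charge = peak_kw * demand_rate
    return {
        "energy_kwh": energy_kwh,
        "peak_kw": peak_kw,
        "energy_charge": energy_charge,
        "demand_charge": demand_charge,
        "total": energy_charge + demand_charge,
    }


# Made-up readings: the same load with and without one compressor spike.
steady = power_bill([100, 100, 100, 100], 0.25, energy_rate=0.10, demand_rate=10.0)
spiky = power_bill([100, 100, 160, 100], 0.25, energy_rate=0.10, demand_rate=10.0)
print("steady:", steady["total"], "spiky:", spiky["total"])
```

In this made-up example the single spike adds only a little energy but raises the billed peak from 100 kW to 160 kW, which is where most of the extra cost comes from.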

Containment

Based on the same logic that controlling air flow improves cooling efficiencies, which includes restricting the mixing of hot and cold air, containment ultimately proved to bring significant wins as well. As the illustration above shows, virtually complete control over airflow was finally achieved with full containment.

With the advent of hot/cold aisles, hot air return plenums, and finally containment, noticeably warmer air returns to the air handlers. A higher return air temperature makes it much easier for air handlers to extract the heat. Mechanical engineers refer to the temperature differential between return air and cooled air as “Delta T (ΔT).” A temperature differential of 20F/11C or more brings significant efficiency gains.
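A rough way to see why ΔT matters is the standard HVAC sensible-heat rule of thumb, Q (BTU/hr) ≈ 1.08 × CFM × ΔT (°F). The sketch below rearranges it to estimate how much air has to move to carry away a given heat load. It assumes standard sea-level air density (the 1.08 factor is lower at altitude), and the function name and the 10 kW load are illustrative only.

```python
BTU_PER_HR_PER_WATT = 3.412   # 1 watt is about 3.412 BTU/hr
SENSIBLE_HEAT_FACTOR = 1.08   # BTU/hr per CFM per degree F, standard sea-level air


def required_cfm(heat_load_watts, delta_t_f):
    """Estimate airflow (cubic feet per minute) needed to remove a heat load
    at a given temperature differential in degrees Fahrenheit."""
    if delta_t_f <= 0:
        raise ValueError("delta_t_f must be positive")
    heat_btu_per_hr = heat_load_watts * BTU_PER_HR_PER_WATT
    return heat_btu_per_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


# Illustrative 10 kW load at two differentials.
for delta_t in (10, 20):
    print(f"Delta T {delta_t}F: {required_cfm(10_000, delta_t):.0f} CFM")
```

Doubling ΔT halves the airflow needed for the same heat load, so a wider differential means the fans have less air to move.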

Also, since hot air doesn’t mix with the cooled air before entering servers, the air produced by air handlers doesn’t need to be as cold to keep computers from overheating. By 2008, ASHRAE revised its recommendations, stating that computer intake air could be as high as 80F/27C and air returning to air handlers could be as high as 95F/35C. This makes hot aisles slightly uncomfortable for people, but it has no negative effect on computers since the exhaust air is effectively pulled back to air handlers. Note that most computer hardware is designed to operate safely at sustained internal temperatures of 140F/60C. When air flow is effectively controlled, the temperature of computer intake air can be quite high, as Dell’s whitepaper illustrates.
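The figures above can be turned into a simple sanity check on sensor readings. This is only a sketch: the thresholds are the numbers quoted in this post, the function names are made up, and it treats server intake temperature as a stand-in for the cooled air leaving the air handlers, which is only a reasonable assumption when containment is working.

```python
# Limits quoted in this post, in degrees Fahrenheit.
MAX_INTAKE_F = 80.0    # upper end for server intake air
MAX_RETURN_F = 95.0    # upper end for air returning to the air handlers
MIN_DELTA_T_F = 20.0   # differential cited as bringing significant gains


def f_to_c(temp_f):
    """Convert a Fahrenheit temperature to Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def check_aisle(intake_f, return_f):
    """Return a list of human-readable problems for one contained aisle."""
    problems = []
    if intake_f > MAX_INTAKE_F:
        problems.append(f"intake air {intake_f:.0f}F/{f_to_c(intake_f):.0f}C is above 80F")
    if return_f > MAX_RETURN_F:
        problems.append(f"return air {return_f:.0f}F/{f_to_c(return_f):.0f}C is above 95F")
    if return_f - intake_f < MIN_DELTA_T_F:
        problems.append("Delta T is below 20F; hot and cold air may be mixing")
    return problems


print(check_aisle(75.0, 95.0))   # readings inside all three limits
print(check_aisle(78.0, 90.0))   # narrow differential
```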

Containment Implementation

To effectively control mixing of hot and cold air a data center needs to accomplish the following:

- Restrict air flow directly around server cabinets

- Control air flow at the end of hot (or cold) aisles

This is handled by:

- Constructing a wall from the top front of cabinets up to the ceiling

- Building doors at the end of hot (or cold) aisles

- Installing blanking panels in all empty RMU (Rack Mount Units) slots in cabinets

You can see from this photo in XMission’s colocation room the short barrier wall above cabinets that extends around the contained aisle with sliding doors on the end. Blanking panels are installed in the open gaps of all cabinets. This implementation has proven to be very effective for our needs.
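Missing blanking panels are easy to overlook, so a simple audit can help. The sketch below uses a made-up data model (rack unit numbers for installed gear and for blanking panels in a cabinet of a given height) and lists any units left open. None of the names reflect a real inventory tool.

```python
def open_gaps(total_units, occupied, blanked):
    """Return rack unit numbers that hold neither gear nor a blanking panel.

    total_units -- cabinet height in rack units (for example, 42)
    occupied    -- unit numbers that hold equipment
    blanked     -- unit numbers covered by blanking panels
    """
    covered = set(occupied) | set(blanked)
    return [unit for unit in range(1, total_units + 1) if unit not in covered]


# Hypothetical 6U example: gear in units 1-2, panels on 3 and 5.
print(open_gaps(6, occupied={1, 2}, blanked={3, 5}))
```

Any unit this reports is a path for hot exhaust to leak back to the intake side of the cabinet.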

Caveats

Properly implemented, aisle containment improves air flow so effectively that higher-density power consumption in racks is possible, but there are important caveats that must be kept in mind:

- All hardware in cabinets needs to properly ventilate from the front to the back and have working fans. Unfortunately, networking gear is notorious for ventilating side-to-side or even backwards (back-to-front)

- Cabinets must be set up to maximize efficient air flow. For example, you cannot leave gear in the exhaust pathway of other gear (e.g., external HDs). Also, cabling (for networking and power, primarily) must be properly managed to allow exhaust heat to efficiently ventilate out of the cabinet.

Solutions

Hopefully, you already practice good cable management rather than something resembling a spaghetti monster. Move external HDDs and other peripherals towards the front of your cabinets and remove a blanking panel in front of them so they can get sufficient air flow. Unfortunately, network hardware can be the hardest and potentially most costly problem to address. That said, we have found that in most cases cabinet air flow pulls heat out well enough that switching gear remains within safe operating ranges, which are typically higher than those of servers. If your networking hardware is consistently and significantly over 140F/60C, check to ensure other hardware is not directly ventilating exhaust heat into it. If so, move gear around as needed. You can also open a blanking panel in front of the networking gear to get it some cooler air.

Containment in Conjunction With Other Efficiency Strategies

Ultimately, while aisle containment is nothing more than a refinement of the hot/cold aisle implementation, facilities that deploy it see vast gains. It further restricts the mixing of hot and cold air, allowing higher operating temperatures for air handlers, thereby reducing, if not altogether removing, the need for power-hungry compressors. The hotter return air to the air handlers also makes it much easier to reject the heat outside. In fact, when combined with variable frequency drive (VFD) fans in the air handlers and cooling towers on the roof, compressors are no longer needed for most, if not all, of the year. Such a strategy is called “water side economizing” and it is quickly becoming popular in new and renovated facilities like XMission’s.

Aisle containment can even be used with bleeding-edge “air side economizing,” which simply exhausts air from the hot aisles and brings in filtered outside air to maintain server operating temperatures. While dust and humidity can potentially shorten the lifetime of equipment used in such an environment, many facilities replace their hardware within three years, so it might not be an issue considering the vast potential power savings.

Conclusion

As part of a complete HVAC efficiency strategy, aisle containment can help data centers achieve enormous energy savings, both lowering their costs and reducing their impact on the environment.

By restricting the mixing of hot and cold air, aisle containment efficiently accomplishes the following:

- Transports chilled air from air handlers more directly to server intake

- Pulls hot exhaust from servers back to air handlers

By attaining those two outcomes, you get these efficiency gains:

- Your chilled air can be warmer

- Your fans don’t need to work as hard

The benefits:

- Use less energy to save money and be greener (depending on your current implementation, save 10-40% on your power bill)

- Get more cooling from your infrastructure

- You can have higher power densities in your racks

If you don’t run your own data center, be sure to choose one that does employ these power-saving efficiency measures.

Grant Sperry works at XMission overseeing operations and colocation. Established back in 1993, XMission was an early Internet pioneer and continues to provide amazing products and personalized service. If you like what we’re doing, contact us to see how we can help your company thrive.


Interesting article. I’ve read of experiments where they immerse servers in non-conducting liquids like mineral oil for more effective heat transfer and cooling. Any thoughts on that? I’m guessing it’s not ready for prime time yet. 🙂

Peter,

Yes, liquid is a much more effective heat transfer medium than air. At the supercomputing conference held in Salt Lake City just over a year ago (http://sc12.supercomputing.org/) there were many vendors displaying various highly efficient technologies involving liquid cooling. I think these have yet to be widely adopted partly because of higher up-front costs, but more so because it can be difficult to adapt all server gear (including networking hardware) to work with such systems. Newer cooling strategies like air and water side economizing have also brought gains that make liquid cooling less necessary.

Thanx for the feedback, Grant.

This Dell whitepaper clearly illustrates the benefits of ambient/intake temperatures around 75F: http://i.dell.com/sites/content/business/solutions/whitepapers/en/Documents/dci-Data-Center-Operating-Temperature-Dell-Recommendation.pdf
