The Salt of XMission

As system administrators at XMission, we hold to the Three Great Virtues of programmers, immortalized by Larry Wall, the inventor of Perl, but adapted for admins:

- Laziness: The quality that makes you go to great effort to reduce overall energy expenditure. It makes you write labor-saving scripts and configs that other admins will find useful, and document what you wrote so you don’t have to answer so many questions about it.

- Impatience: The anger you feel when the computer is being lazy. This makes you troubleshoot and tune servers and applications that don’t just react to your needs, but actually anticipate them. Or at least pretend to.

- Hubris: Excessive pride, the sort of thing Zeus zaps you for. Also the quality that makes you administer servers that other people won’t want to say bad things about.

Having these three qualities leads us to look for ways to make both XMission and our system administration team awesome. As such, it seems fitting to put up a post explaining part of what makes XMission so awesome. This post is technical in nature, and a bit long. Read on if you want to be awesome too!

The UNIX Philosophy

In order to understand this post, let me introduce you to the UNIX philosophy. It is commonly summarized as a set of tenets for software on a UNIX system:

- Small is beautiful.

- Make each program do one thing well.

- Build a prototype as soon as possible.

- Choose portability over efficiency.

- Store data in flat text files.

- Use software leverage to your advantage.

- Use shell scripts to increase leverage and portability.

- Avoid captive user interfaces.

- Make every program a filter.

A few of these points play really well together, specifically “make each program do one thing well”, “store data in flat text files” and “use software leverage to your advantage”. It would certainly be nice to configure many servers simultaneously with one piece of software, rather than logging into each server and repeating the same configuration one by one. Enter configuration management.

Single Server Configuration

Suppose you have a Linux server that needs to run a website. Nothing complicated or fancy, just one box doing one thing. So, you need to start planning. Will you need a backend database? What will the website be written in? PHP? Python? Ruby? Flat HTML? What sort of hardware should it run on? Should the server be a virtual machine? Will users need external access to the server? If so, will that be provided by SSH? VNC? Pretty soon, you realize that you have a lot of work to do.

So, you set up a physical server in your server closet or datacenter. You now begin installing the Linux operating system. Once complete, you set up users and passwords. You install Apache and configure Apache to serve the website. You also configure Apache to serve the site over SSL. Because it’s a site written in Python, you install the necessary Python software, so Apache can serve the site correctly. Because the Python application needs a backend database, you then install MySQL. Installing MySQL also means setting up additional users and passwords, creating a database, and granting the proper privileges. After you are finished, you verify everything looks good by testing the site locally. Finally, you push it out to production.

In a nutshell, you’ve executed the following steps:

- Installed the Linux operating system.

- Set up Linux users and passwords.

- Installed Apache.

- Configured Apache.

- Set up SSL with Apache.

- Installed Python.

- Possibly configured Apache again.

- Installed MySQL.

- Created a MySQL database.

- Set up MySQL users and passwords.

- Configured Apache to talk to MySQL.

- Installed OpenSSH for remote access.

- Set up a firewall to limit access to SSH, Apache and MySQL.

It was a lot of work, but it was worth it. However, that was only one server. What if you needed to set up Apache on multiple servers for shared hosting, such as XMission provides? What if you also needed to set up multiple MySQL database servers, or a single shared MySQL database server for your multiple Apache instances? Not to mention installing the necessary software dependencies to power the website. Then there are usernames and passwords. Hopefully, that is taken care of with LDAP, but possibly not. The more servers you have, the more difficult this becomes.

Introducing Complexity

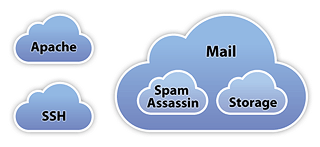

Some companies have many servers in their server closet or datacenter, each with specific responsibilities. Many of them are separated by logical namespaces, such as “internal servers”, “infrastructure servers”, “web servers”, “database servers”, “backup servers”, “mail servers”, and so forth. Although you’ve installed and configured one server for one set of tasks, you may need to install and configure additional servers in a different manner. Further, even though you have a “mail cluster”, that cluster could be broken up into mail storage, POP/IMAP servers, spam servers, routing servers, and so forth. So, configuring your mail storage server would require installing and configuring a different set of software than your spam server. It could even require a different set of hardware. Then you may wish to have mail servers for employees that are separate from those for customers. A great deal of planning goes into this sort of architecture and engineering.

Enter Salt

Here at XMission, we’re big proponents of buying local. That includes using and contributing to local software projects, when available, that are a good fit for our business model. Salt Stack is a local software development company based here in Salt Lake City, Utah that specializes in configuration management for UNIX/Linux servers. Its tool, Salt, is the configuration management utility that we run in our infrastructure.

Salt uses a server/client model, as well as a peer-to-peer model (not discussed in this post). The Salt server is called the “salt master”, and the clients are called “salt minions”. Salt can take either or both of two approaches to communication between the master and the minions:

- The master pushes changes out to each of the minions.

- The minions query the master for changes.

In either case, based on some configuration, the minions get their necessary updates. The configuration is in plain text files written in a data serialization format known as YAML. Each YAML file contains the necessary instructions for which software to install, which users to set up, how to manage configuration files for Apache and other services, and so forth. Further, Salt can break down the YAML files into logical namespaces that make the most sense for you.

Here at XMission, we’ve broken these namespaces down into “state” and “services”. The “state” namespace describes a broad function that servers will be performing. For example, for virtualization, we have a “kvm” state. For mail servers, we have a “mailcluster” state. There are “shared-hosting”, “cloud”, “dns” and “customer” states as well, among others. The “services” namespace describes specific server software that should be installed and/or configured, such as base “core” software, Apache, MySQL, SSH, Nagios, security cameras, and other services, whether they be internal or external. The trick is tying them together, because surely Apache will be installed on KVM servers, as an example.

Each logical namespace is broken down into “SLS” files, with the global namespace being called “top.sls”. These are the YAML files that I described. A beginning top.sls file could look something like this:

    base:
      '*':
        - core
        - ldap.client
        - nagios.client
        - munin.client
        - backup.client
        - ssh

In this case, the configuration in our top.sls file lists the base Salt configurations that we want applied to every server that is set up. This is identified by the “*” in the top.sls YAML configuration. We want “core” software installed and configured, an LDAP client for user accounts, monitoring with Nagios, graphing with Munin, backups, and remote access via SSH.

Now, suppose we have a mail cluster of SpamAssassin servers. These will also likely have some sort of common features and software installed. So, in addition to the configuration above, we can add the following to our top.sls file:

    base:
      '*':
        - core
        - ldap.client
        - nagios.client
        - munin.client
        - backup.client
        - ssh
      'spam*.xmission.com':
        - mailcluster
        - spamassassin
        - exim

Any server whose hostname begins with “spam” will get the “mailcluster” state configuration pulled in, as well as the “spamassassin” state and the “exim” state. This means that if I were to set up “spam01.xmission.com” with Salt, I would pull in the following services and states:

- Core software (htop, tcpdump, vim, not emacs, ZSH and Git)

- An LDAP client

- A Nagios client

- A Munin client

- A backup client

- An SSH server

- Any software defined in the “mailcluster” state

- The necessary SpamAssassin software

- The Exim mail daemon
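The matching described above can be sketched in a few lines of Python. This is only an illustration, not Salt's own code: the `TOP` dict mirrors the top.sls from this post, while `states_for` is a hypothetical helper that assumes targets are shell-style globs compared against the minion's hostname.

```python
from fnmatch import fnmatchcase

# The top.sls from the post, written as a plain Python dict for illustration.
TOP = {
    "base": {
        "*": ["core", "ldap.client", "nagios.client", "munin.client",
              "backup.client", "ssh"],
        "spam*.xmission.com": ["mailcluster", "spamassassin", "exim"],
    }
}


def states_for(minion_id, top=TOP, env="base"):
    """Collect the states of every target whose glob matches the minion ID."""
    states = []
    for target, names in top[env].items():
        if fnmatchcase(minion_id, target):
            for name in names:
                # Keep the first occurrence only, preserving file order.
                if name not in states:
                    states.append(name)
    return states


print(states_for("spam01.xmission.com"))
print(states_for("www01.xmission.com"))
```

The first call returns the six base states followed by the three mail states. The second hostname (made up for this example) matches only the “*” target, so it gets the base states alone.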

The same will be applied when I set up “spam02.xmission.com”, “spam03.xmission.com” and even “spam88.xmission.com”. Hopefully, this can do a great deal of work for me, so I can follow the Three Great Virtues of a system administrator: laziness, impatience and hubris. In order to get this working for me, we need to look at what these states are doing. We won’t look at each one individually (this post is long enough already), so let’s look at what “core” brings in.

Specifying “core” in my top.sls means one of two things: either I have a subdirectory called “core” with an init.sls file in it, or I have a “core.sls” file in the same directory as my top.sls. Let’s suppose I have a “core” subdirectory with an “init.sls” configuration file. It would look something like this:

    $ ls
    services/  states/  top.sls
    $ ls services
    backup/  bind/  cameras/  core/  exim/  http/  nagios/  iptables/  ldap/  munin/  mysql/  ntp/  ssh/  zfs/
    $ ls services/core
    init.sls
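The “one of two things” rule can be written down as a small lookup. The sketch below is a simplification under stated assumptions: `candidate_paths` and `resolve` are hypothetical helpers, not Salt functions, dots in a state name are treated as directory separators, and paths are relative to wherever Salt's file root points (which this post does not specify).

```python
def candidate_paths(state_name):
    """Return the two SLS files a state name could refer to."""
    base = state_name.replace(".", "/")
    return [base + ".sls", base + "/init.sls"]


def resolve(state_name, existing_files):
    """Pick the first candidate that exists, or None if neither does."""
    for path in candidate_paths(state_name):
        if path in existing_files:
            return path
    return None


# A made-up file listing, relative to the assumed file root.
files = {"top.sls", "core/init.sls", "ldap/client.sls"}

print(candidate_paths("core"))
print(resolve("core", files))
print(resolve("ldap.client", files))
```

With this listing, “core” resolves to the init.sls inside its subdirectory, and “ldap.client” resolves to a client.sls file inside an “ldap” directory.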

So, I have my “services” and “states” directories. Under “services” is where I defined “core”. Within that subdirectory, I can find my “init.sls”. This file determines which packages I want installed on every server:

    core packages:
      pkg:
        - installed
        - pkgs:
          - git-core
          - htop
          - tcpdump
          - tmux
          - vim
          - zsh

Salt understands that “installed” means that I need to have those packages installed. Further, Salt is smart enough to use the Linux server’s proper package management utility to install the necessary software. It doesn’t matter if this is Debian or CentOS, either apt(8) or yum(8) will be used appropriately.
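To make the idea of a declarative “installed” state concrete, here is a rough Python sketch of the reasoning involved. It is not how Salt is implemented: `INSTALL_COMMANDS` and `plan_install` are hypothetical names, and the OS family labels and command flags are assumptions chosen for the example. The point is that the state describes the desired result, and the tool works out which packages are missing and which package manager to call.

```python
# Hypothetical mapping from an OS family to its install command prefix.
INSTALL_COMMANDS = {
    "Debian": ["apt-get", "install", "-y"],
    "RedHat": ["yum", "install", "-y"],
}


def plan_install(os_family, wanted, already_installed):
    """Return the command needed to reach the desired state, or None."""
    missing = [pkg for pkg in wanted if pkg not in already_installed]
    if not missing:
        # Everything is already present, so there is nothing to do.
        return None
    return INSTALL_COMMANDS[os_family] + missing


core = ["git-core", "htop", "tcpdump", "tmux", "vim", "zsh"]

# A Debian server that already has vim and zsh.
print(plan_install("Debian", core, {"vim", "zsh"}))

# A CentOS server that already has every core package.
print(plan_install("RedHat", core, set(core)))
```

Running the same plan twice changes nothing the second time, which is what makes it safe to apply the same configuration to a server over and over.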

In order to push out a change, we just need to modify the appropriate YAML configuration file, load the change into the Salt master, then either push the change to the server, or have the server pull the update off of the master.

How XMission Uses Salt

Salt has eased a great amount of system administration for us. We have a large combination of physical and virtual servers, including internal infrastructure servers, customer-facing servers, and others.

First off, when installing servers from scratch, we make sure that the Salt minion is installed by default during the install process. The Salt master must then approve the minion before the two can communicate. Once approved, the master brings the minion into its proper configuration, a process called a “highstate”. We also have a team of multiple administrators, each with a vested interest in setting up and configuring servers. As such, we need a way to update the necessary Salt YAML configuration files, and push the result out to the appropriate server after it has already been built.

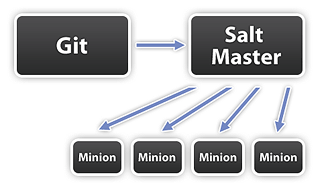

In order to do this cleanly, we use the Git distributed version control software. Certain administrators have the appropriate rights to push changes to the Git repository. An internal IRC bot will notify an internal IRC channel that a change has been pushed, so the other administrators can update their version of the repository, check if an error has been made, and also have a local log of changes in their IRC client.

After the changes have been pushed, the Salt master server will check out the latest version of the production branch of the Git repository. Finally, one simple call is all that is needed to update the servers:

    # salt '*' state.highstate

This makes Salt a remote execution environment as well as a configuration management system. This command tells the Salt master to load in the configuration from the repository, and push out any necessary changes. Of course, if I only needed to update a subset of servers, then I could make the following call (updating just the SpamAssassin servers):

    # salt 'spam*.xmission.com' state.highstate

Each SpamAssassin Salt minion will then get its necessary changes, apply them, and reload any service as required by the Salt configuration.
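If you wanted to script these calls, a thin wrapper might look like the sketch below. The `highstate_command` helper is hypothetical and only builds the command line without running it. The `dry_run` option appends Salt's `test=True` argument, which asks the minions to report what would change without applying anything.

```python
import shlex


def highstate_command(target="*", dry_run=False):
    """Build the argument list for a highstate run against a target."""
    argv = ["salt", target, "state.highstate"]
    if dry_run:
        # test=True makes Salt report pending changes instead of applying them.
        argv.append("test=True")
    return argv


# Print a copy-pasteable command for a dry run on the spam servers.
print(shlex.join(highstate_command("spam*.xmission.com", dry_run=True)))
```

Keeping the target as a separate list element means the glob reaches Salt untouched, rather than being expanded by the shell, if the list is later handed to something like `subprocess.run`.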

Conclusion

Salt has been a great addition to our day-to-day administration. While there is always work to be done, it’s nice knowing that I can check out the latest updates in Git, make the necessary Salt configuration changes, push to the Git server, update the Salt master, then push the updates to the minions. This is so much easier than logging into each server individually and making the change by hand, which is time-consuming and multiplies the chance of human error.

Thanks to Salt for helping make XMission more awesome!
